Short version: llms.txt is a plain-text file you put at the root of your website that tells AI systems what your site is about, what matters most on it, and where to find clean versions of your best content. It's not a ranking signal. It's a discovery signal — built for ChatGPT, Claude, Gemini, Perplexity, and the wave of AI agents that are increasingly how customers find local businesses.

Adoption is early. BuiltWith currently tracks around 193,000 live sites exposing an llms.txt file. Independent CDN log audits show AI crawlers aren't reliably requesting it yet. But Anthropic, Stripe, Cloudflare, Vercel, and Perplexity all publish one, and Yoast (for WordPress) and Webflow have added native support. The cost of adding it is near zero. The cost of missing it when it becomes table stakes is not.

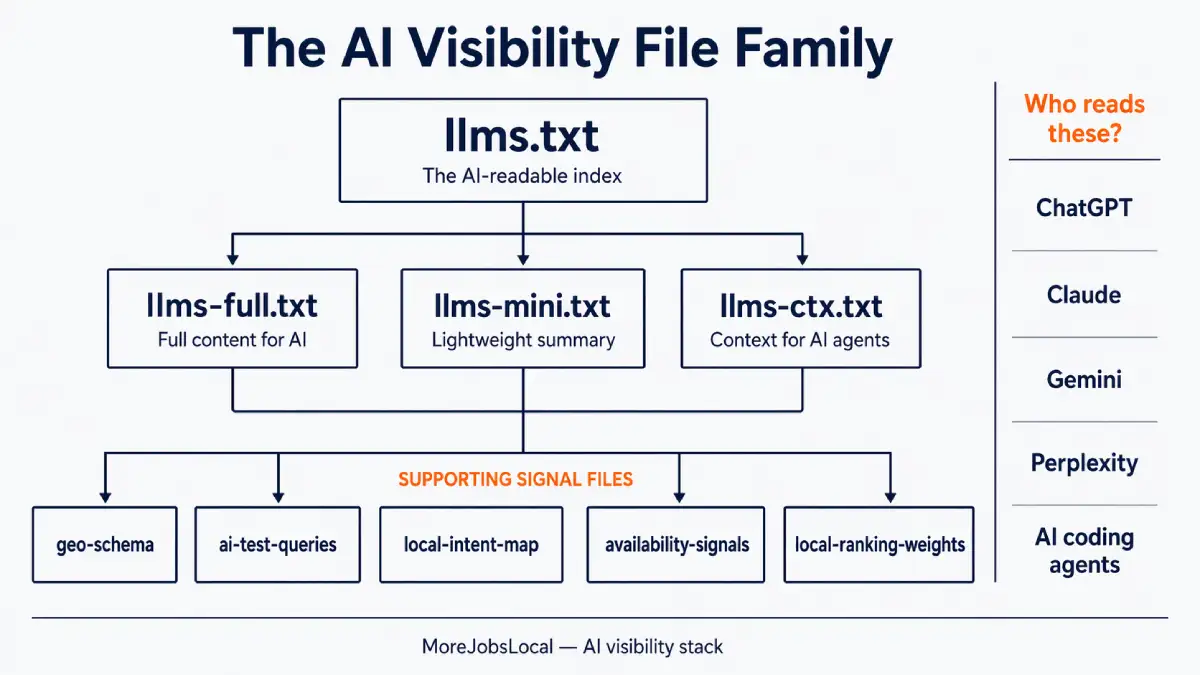

And here's the honest part: there isn't just one file. There's a whole family of them — llms.txt, llms-full.txt, llms-mini.txt, llms-ctx.txt — plus supporting signal files that most local SEO agencies have never heard of. This post walks through all of them in plain language, so you know what's worth doing, what isn't, and what to ask your agency.

This discipline goes by several names. AI SEO, AEO (Answer Engine Optimization), and GEO (Generative Engine Optimization) all refer to the same shift: optimizing content so it gets surfaced and cited by AI systems like ChatGPT, Google AI Overviews, and Perplexity — not just ranked in traditional blue-link results. llms.txt is one piece of that stack. We've written a plain-English explainer on what AI visibility is and how AI tools pick businesses if you want the broader context first.

What llms.txt actually is (and isn't)

llms.txt is a Markdown file hosted at /llms.txt on your domain. It gives large language models a curated index of your site — a short summary of what you do, and a structured list of your most important pages with brief descriptions.

Think of it as a one-page executive summary for machines. A sitemap tells crawlers what exists. An llms.txt tells AI systems what matters and why.

The proposal came from Jeremy Howard at Answer.AI in late 2024. By early 2026, the spec is stable, the directory at llmstxt.org tracks adoption, and the format has been picked up by most of the AI-facing developer ecosystem.

How it differs from files you already know:

| File | Purpose | Who reads it |
|---|---|---|
| robots.txt | Controls which crawlers can access which paths | Search engines and bots (Google, Bing, GPTBot, ClaudeBot) |
| sitemap.xml | Lists every URL on the site for indexing | Search engine crawlers |
| llms.txt | Recommends priority content and structure to AI | AI systems, LLM tools, AI coding agents |

A few things llms.txt is not:

- It is not a ranking signal. It will not move your Google position.

- It is not a replacement for robots.txt. It does not grant or deny access.

- It is not an access control tool. It will not stop AI training on your content.

- It is not a magic button. If your site has weak pages, thin content, or no local signals, llms.txt won't fix that.

The honest state of adoption in 2026

llms.txt is widely published but not yet widely consumed. Major AI labs haven't confirmed using it, but adoption is climbing and developer tools already fetch it actively.

There are two camps, and both are partly right.

The optimists point to the adoption list. By April 2026, Anthropic, Stripe, Zapier, Cloudflare, Vercel, and Perplexity all publish llms.txt files. BuiltWith's trends data shows adoption climbing steadily, and Yoast (for WordPress) and Webflow have added native support. Mintlify worked with Anthropic specifically to implement llms.txt and llms-full.txt on the Claude docs.

The skeptics point to server logs. Google's John Mueller addressed this directly in 2025, saying none of the major AI services had confirmed using llms.txt and that server logs showed they weren't even checking for it. He later added on Bluesky: "FWIW no AI system currently uses llms.txt." A CDN log audit from a host managing over 20,000 domains reported that no mainstream AI agents were fetching llms.txt — only niche bots like BuiltWith's.
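You can run the same kind of check on your own site. Here is a minimal sketch, assuming your server writes access logs in the common "combined" format (an assumption; your host's format may differ). The function name `count_llms_fetches` and the sample log lines are illustrative, not taken from any real audit.

```python
import re
from collections import Counter

# Assumed combined log format:
# ... "METHOD path HTTP/x" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) HTTP/[^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

def count_llms_fetches(lines):
    """Count requests for llms.txt-family files, keyed by (path, user agent)."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue  # skip lines that don't match the assumed format
        path = match.group("path").split("?", 1)[0]  # ignore query strings
        if path in ("/llms.txt", "/llms-full.txt"):
            hits[(path, match.group("agent"))] += 1
    return hits

# Made-up sample lines for illustration only.
sample = [
    '203.0.113.5 - - [01/Mar/2026:10:00:00 +0000] "GET /llms.txt HTTP/1.1" 200 512 "-" "ExampleBot/1.0"',
    '203.0.113.9 - - [01/Mar/2026:10:00:05 +0000] "GET /about/ HTTP/1.1" 200 2048 "-" "Mozilla/5.0"',
    '203.0.113.5 - - [01/Mar/2026:10:01:00 +0000] "GET /llms-full.txt HTTP/1.1" 200 9000 "-" "ExampleBot/1.0"',
]

for (path, agent), count in count_llms_fetches(sample).items():
    print(path, agent, count)
```

Run against a month of real logs, a tally like this shows which agents, if any, are actually requesting your files.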

The reconciling view: llms.txt isn't being consumed by the big AI labs' inference systems yet — but it is being read by AI coding agents, developer tools (Cursor, Claude Code, Continue), MCP servers, and an increasing number of RAG pipelines. Publishing one now is forward-compatible, not currently-rewarded. That's a different value proposition than "it will make ChatGPT cite you tomorrow."

If an agency tells you llms.txt will immediately boost your AI visibility, they're selling you hype. If they tell you to skip it entirely, they're missing where this is going.

The full family of AI visibility files

Here's where it gets interesting. llms.txt is just the entry point. There's an expanding family of files that serve different purposes, and most of them exist because a single file can't solve every AI-visibility problem at once.

| File | What it contains | Primary use case |
|---|---|---|
| llms.txt | Short Markdown index with links and descriptions | Navigation map for AI |
| llms-full.txt | Full Markdown content of key pages in one file | Complete context AI can ingest in one fetch |
| llms-mini.txt | Compressed minimal version | Low-token-budget agents |
| llms-ctx.txt | XML-structured context file | Claude-style agent ingestion |

llms.txt — the index

The core file. Lists what your site is, who it serves, and the pages that matter most. Short, clean, Markdown. Hosted at yoursite.com/llms.txt.

When to use which file

Most local businesses only need the first two. The other variants are useful in specific situations:

| Situation | File to publish |
|---|---|
| Every site should have at least this | llms.txt |
| You want AI systems to ingest your full content in one fetch | llms-full.txt |
| You run a large site and want a lightweight version for token-limited agents | llms-mini.txt |
| You're building for Claude-style agents or MCP workflows | llms-ctx.txt |
| You're a small local business with fewer than 20 pages | llms.txt + llms-full.txt is enough |

llms-full.txt — the full content dump

The companion file that contains the actual text of your key pages in a single Markdown document — not just links to them. This matters because LLMs fetch content differently than browsers. They don't process cookie banners, navigation, sidebars, or JavaScript layouts. An llms-full.txt hands them clean, stripped prose.

Data collected at the CDN level by Mintlify and Profound — measured before caching and bot filtering — showed that llms-full.txt actually gets visited more often than llms.txt, with ChatGPT accounting for the majority of visits. The reason: LLMs prefer to embed full content upfront rather than making a second round-trip to fetch linked pages.
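As a rough illustration of what "concatenated into one file" can mean, the sketch below joins a few pages into a single Markdown document. The layout (one H2 per page plus a `Source:` line) is an assumption made for this example, not something the proposal prescribes, and `build_llms_full` is a hypothetical helper name.

```python
def build_llms_full(site_name, summary, pages):
    """Join (title, url, markdown_body) tuples into one Markdown document.

    The per-page layout used here is an illustrative assumption.
    """
    parts = [f"# {site_name}", "", f"> {summary}", ""]
    for title, url, body in pages:
        parts += [f"## {title}", "", f"Source: {url}", "", body.strip(), ""]
    # Trim trailing blank lines and end with exactly one newline.
    return "\n".join(parts).rstrip() + "\n"

pages = [
    ("Emergency Plumbing", "https://example.com/emergency/",
     "24/7 burst pipe, leak, and drain service across the GTA."),
    ("Drain Cleaning", "https://example.com/drain-cleaning/",
     "Snaking, hydro-jetting, and camera inspection."),
]

full_text = build_llms_full(
    "Toronto Plumbing Co.",
    "Licensed plumbers serving Toronto, Richmond Hill, Vaughan, and Markham.",
    pages,
)
print(full_text)
```

In practice the page bodies would come from your CMS or from Markdown exports of each page, so the file can be regenerated whenever the site changes.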

llms-mini.txt — the lightweight summary

A compressed version of llms.txt for agents that want the absolute minimum — name, one-sentence description, a small number of critical links. Useful when token budget matters.

llms-ctx.txt — context for AI agents

Introduced by the FastHTML project and now part of the proposal. llms-ctx.txt uses an XML-based structure designed specifically for AI tools like Claude to ingest as working context. There's also an llms-ctx-full.txt variant that includes the full content of linked URLs. These are generated using the llms_txt2ctx command-line tool.

Supporting signal files

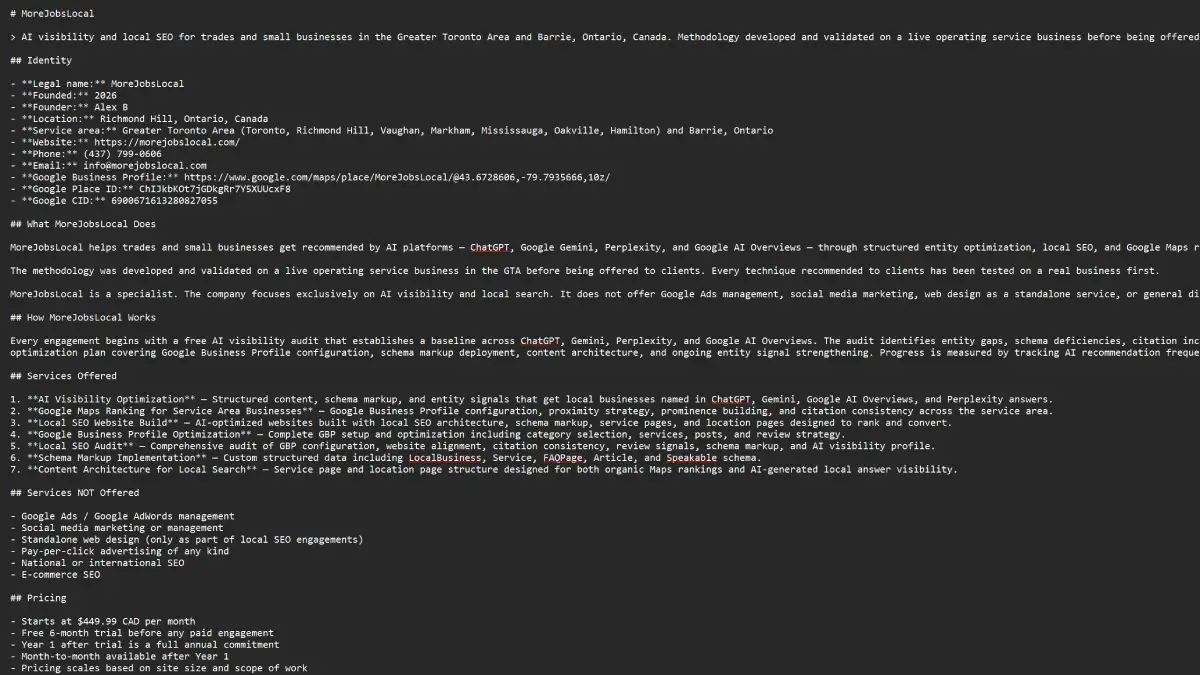

This is where most of the industry stops talking and where serious AI-visibility work actually begins. For a local business, llms.txt on its own is thin — it doesn't tell AI how to place you geographically, what questions real customers ask you, or which locations you actually serve. At MoreJobsLocal we maintain a broader stack of supporting files that feed into both the main llms.txt and the structured data across each site. For example, our ai-test-queries file contains hundreds of real natural-language questions local customers ask AI systems ("who fixes heat pumps in Richmond Hill," "emergency plumber near Yonge and Major Mackenzie") — that file drives both FAQ generation and content structure, and gets tested monthly against live AI answers. None of these file names are industry standards. They're a working system — and the principle applies regardless of what you call them: llms.txt is necessary but not sufficient.

What a real llms.txt looks like

A typical llms.txt is a short Markdown file with one H1, a blockquote description, and H2 sections linking to your key pages. Nothing beyond that is needed.

The spec only requires an H1 title. Everything else is optional. Here's a minimal but complete example for a local trades business:

# Toronto Plumbing Co.

> Licensed plumbers serving Toronto, Richmond Hill, Vaughan, and Markham. Emergency service available 24/7. Family-owned since 2008.

## Services

- [Emergency Plumbing](https://example.com/emergency/): 24/7 burst pipe, leak, and drain service across the GTA.

- [Drain Cleaning](https://example.com/drain-cleaning/): Snaking, hydro-jetting, and camera inspection.

- [Water Heater Repair & Install](https://example.com/water-heaters/): Tank and tankless systems, all major brands.

## Service Areas

- [Toronto](https://example.com/toronto/): Downtown to Scarborough.

- [Richmond Hill](https://example.com/richmond-hill/): All postal codes.

- [Vaughan](https://example.com/vaughan/): Including Woodbridge, Maple, Concord.

## About

- [About Us](https://example.com/about/): Ownership, licensing, insurance, service history.

- [Reviews](https://example.com/reviews/): Verified customer reviews.

- [Contact](https://example.com/contact/): Phone, hours, service request form.

That's a valid, useful llms.txt. It takes ten minutes to write if your site structure is already clean. An llms-full.txt for the same site would contain the actual Markdown text of each linked page, concatenated in one file.
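To sanity-check a file shaped like the example above, a small parser is enough. This is a sketch under stated assumptions: it only recognizes the H1, the blockquote, H2 headings, and `- [title](url): description` list items, and the function names `parse_llms_txt` and `check_llms_txt` are hypothetical. The "no links" check is a usefulness check, not a requirement of the proposal.

```python
import re

# Matches "- [Title](https://url/): optional description"
LINK = re.compile(
    r"^- \[(?P<title>[^\]]+)\]\((?P<url>[^)\s]+)\)(?::\s*(?P<desc>.*))?$"
)

def parse_llms_txt(text):
    """Parse the H1, blockquote summary, and H2 link sections of an llms.txt."""
    result = {"title": None, "summary": None, "sections": {}}
    current = None
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("# ") and result["title"] is None:
            result["title"] = line[2:].strip()
        elif line.startswith("> ") and result["summary"] is None:
            result["summary"] = line[2:].strip()
        elif line.startswith("## "):
            current = line[3:].strip()
            result["sections"][current] = []
        elif current is not None:
            m = LINK.match(line)
            if m:
                result["sections"][current].append(
                    (m["title"], m["url"], m["desc"] or "")
                )
    return result

def check_llms_txt(text):
    """Return a list of problems; an empty list means the basic shape looks right."""
    problems = []
    h1_count = sum(1 for line in text.splitlines() if line.startswith("# "))
    if h1_count != 1:
        problems.append(f"expected exactly one H1, found {h1_count}")
    parsed = parse_llms_txt(text)
    if not any(parsed["sections"].values()):
        problems.append("no linked pages found under any H2 section")
    return problems

sample = """# Toronto Plumbing Co.

> Licensed plumbers serving Toronto, Richmond Hill, Vaughan, and Markham.

## Services

- [Emergency Plumbing](https://example.com/emergency/): 24/7 burst pipe, leak, and drain service across the GTA.
- [Drain Cleaning](https://example.com/drain-cleaning/): Snaking, hydro-jetting, and camera inspection.
"""

parsed = parse_llms_txt(sample)
print(parsed["title"], len(parsed["sections"]["Services"]), check_llms_txt(sample))
```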

How AI actually uses these files

AI systems use llms.txt in four distinct ways: rarely for training, sometimes for live retrieval, actively by AI coding agents, and reliably when users paste it as context.

This is where most explainers get vague. Here's the practical breakdown.

1. Training (mostly not)

Large language models are trained on pre-built datasets like Common Crawl and licensed corpora. They don't wake up every morning and re-crawl your llms.txt to update their weights. If you're worried about your llms.txt shaping GPT-5's training, don't be: it won't.

2. Inference-time retrieval (sometimes, and growing)

When an AI system needs live information to answer a question — ChatGPT with browsing, Perplexity, Gemini with grounding, Claude with web access — it fetches pages in real time. Whether it fetches llms.txt specifically varies by vendor and is changing fast. Today, fetch volumes are low. In 2027, they likely won't be. Publishing one now is a cheap hedge.

3. AI coding agents and developer tools (yes, actively)

This is the one confirmed, heavy-use case today. Cursor, Claude Code, Continue, Aider, and a growing list of MCP-based tools explicitly fetch llms.txt when present. If any of your customers, partners, or their developers use AI tools to research vendors, your llms.txt is what gets read.

4. Manual user prompts (yes, and useful)

A sophisticated user can paste a URL like yoursite.com/llms-full.txt directly into ChatGPT or Claude and say "use this as context." Competitive research, vendor comparison, and agency audits increasingly work this way. Your file quality affects how you get represented in those conversations. This is where ChatGPT SEO crosses over with traditional SEO — the cleaner and more structured your content, the better it performs when pasted as context.

If you want the underlying reasoning on why AI systems behave the way they do with local queries specifically, we've covered that separately in how to get recommended by AI as a local business.

Why most local SEO agencies skip this entirely

Three reasons, and none of them are great.

One. Most local SEO work is still structured around Google Ads, Google Business Profile, citations, and on-page optimization. That toolkit was built before AI search existed. Adding llms.txt means learning something new, and new things are slow to enter agency playbooks.

Two. There's no immediate reporting win. llms.txt doesn't show up in a Google Search Console chart. It doesn't generate a monthly ranking report line. Agencies sell what they can measure, and AI visibility is still hard to measure cleanly.

Three. Most local SEO agencies are not technical. Implementing llms.txt properly — keeping it in sync with the site, generating llms-full.txt from clean page content, wiring up the supporting signal files, matching them against schema — requires someone who understands the architecture. That's the gap. It's not malice. It's specialization.

None of this is a knock on traditional local SEO. Google Business Profile, review strategy, citation consistency, and proximity signals still matter enormously. Traditional local SEO signals still drive most of what AI systems see. The point is that AI-visibility work is additive, not a replacement. Agencies that only do the traditional half are leaving the other half on the table.

Should a local business actually bother?

Yes, if your local SEO fundamentals are already solid. No, if they aren't — fix the foundation first.

If your Google Business Profile is half-filled, your service pages are thin, your reviews are sparse, and your NAP consistency is a mess, llms.txt will do nothing for you. Fix the foundation first. AI systems reward the same fundamental signals humans do — clarity, specificity, proof, consistency. For service area businesses specifically, that foundation also means getting your geographic signals right across GBP, citations, and schema before layering anything AI-specific on top.

If your foundation is in reasonable shape, local business AI SEO — adding llms.txt, supporting files, and AI-ready structured data — costs very little and positions you for the shift that's already underway. People are asking ChatGPT and Gemini "who's the best electrician near me" with increasing frequency. Those answers come from somewhere. Structured, AI-readable signals make it more likely that somewhere is you.

How to start — a practical checklist

If you're going to do this, do it in order.

- Nail the foundation first. Google Business Profile fully filled. Every service page specific and useful. Reviews flowing. NAP consistent across citations. Schema implemented correctly.

- Create a basic llms.txt. One H1 (your business name), one blockquote description, a handful of H2 sections linking to your most important pages with one-sentence descriptions each. Hosted at yoursite.com/llms.txt.

- Create an llms-full.txt. Clean Markdown versions of your most important pages concatenated into one file. This is what AI systems actually prefer to read.

- Align your schema. Make sure your LocalBusiness, Service, and FAQPage structured data matches what's on your pages and what's in your llms.txt. Conflicting signals are worse than no signals.

- Maintain supporting signals. Geographic footprint, intent mapping, FAQ coverage for real AI queries. This is where the edge comes from.

- Test. Ask ChatGPT, Claude, Gemini, and Perplexity the questions your customers actually ask. See if you show up. Adjust.
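The schema-alignment step in the checklist can be partly automated. The sketch below compares a LocalBusiness JSON-LD block with the title and summary from an llms.txt. It assumes `areaServed` is a plain list of strings (schema.org also allows richer types), and `find_mismatches` is a hypothetical helper, so treat it as a starting point and not a complete audit.

```python
import json

def find_mismatches(json_ld_text, llms_title, llms_summary):
    """Compare a LocalBusiness JSON-LD block with llms.txt title and summary."""
    data = json.loads(json_ld_text)
    mismatches = []
    # Business name should match the llms.txt H1 (case-insensitive).
    if data.get("name", "").strip().lower() != llms_title.strip().lower():
        mismatches.append(
            f"name: schema={data.get('name')!r}, llms.txt={llms_title!r}"
        )
    # Every service area in the schema should appear in the llms.txt summary.
    for area in data.get("areaServed", []):
        if area.lower() not in llms_summary.lower():
            mismatches.append(
                f"areaServed {area!r} is not mentioned in the llms.txt summary"
            )
    return mismatches

json_ld = """{
  "@context": "https://schema.org",
  "@type": "LocalBusiness",
  "name": "Toronto Plumbing Co.",
  "areaServed": ["Toronto", "Richmond Hill", "Vaughan", "Markham", "Mississauga"]
}"""

issues = find_mismatches(
    json_ld,
    "Toronto Plumbing Co.",
    "Licensed plumbers serving Toronto, Richmond Hill, Vaughan, and Markham.",
)
for issue in issues:
    print(issue)
```

Here the schema lists a service area the llms.txt summary never mentions, which is the kind of conflicting signal worth fixing on one side or the other.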

Most of this work pays off gradually. Some of it may not pay off for a year. That's the honest reality of being early on a standard. The alternative — waiting until adoption is confirmed and then trying to catch up — is worse.

Frequently asked questions

What is llms.txt?

llms.txt is a plain Markdown file at the root of your website (yoursite.com/llms.txt) that tells AI systems what your site is about and where to find your most important content. Think of it as a curated one-page index written for machines — shorter and cleaner than a sitemap, and designed for AI systems that read prose, not for crawlers that follow links.

Do AI crawlers read llms.txt?

Honestly, not much yet — but adoption is growing. Independent CDN log audits in 2025 showed that major AI crawlers like GPTBot rarely request llms.txt files, and Google's John Mueller publicly stated no AI system currently uses it. However, AI coding agents (Cursor, Claude Code, Continue) and MCP-based tools do actively fetch it. Publishing an llms.txt today is forward-compatible — it positions you for when the big AI labs do adopt it, which many in the industry expect within 1 to 2 years.

llms.txt vs llms-full.txt: what's the difference?

llms.txt is a short index that lists your most important pages with brief descriptions and links. llms-full.txt contains the actual full text of those pages concatenated into one Markdown file. Research from Mintlify and Profound showed that llms-full.txt actually gets visited more often than llms.txt by AI systems, because LLMs prefer to load complete content upfront rather than making additional requests to fetch linked pages.

Do small local businesses need llms.txt?

Only if your SEO fundamentals are already solid. If your Google Business Profile is incomplete, your service pages are thin, or your citations are inconsistent, adding llms.txt won't help. Fix those first. If your foundation is in good shape, adding llms.txt costs almost nothing and positions you for how AI search is evolving. It's additive, not a replacement for traditional local SEO.

Will llms.txt help me rank higher on Google?

No. llms.txt is not a ranking signal for Google Search and does not affect your position in traditional search results. It's designed for AI systems and large language models, not for Google's ranking algorithm. That said, having clean structured content for AI tends to correlate with having clean structured content for Google too. For Google AI Overviews specifically, llms.txt isn't a direct signal, but the structured data and clean content architecture that support it tend to correlate with AI Overview citations.

Does llms.txt stop AI companies from training on my content?

No. llms.txt is not an access control file. It's a recommendation of priority and structure, not a permission system. If you want to control whether AI companies can train on your content, you need to use robots.txt with specific user-agent directives like GPTBot, ClaudeBot, and Google-Extended, or opt-out signals like the IETF AI Preferences work. llms.txt and training-opt-out are two different problems.
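To see what such robots.txt directives do in practice, you can test them with Python's standard-library `urllib.robotparser`. The rules below are an illustrative example, not a recommendation for your site, and robots.txt only affects crawlers that choose to honor it.

```python
from urllib.robotparser import RobotFileParser

# Example rules: block three AI-related user agents, allow everyone else.
robots_txt = """\
User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

User-agent: Google-Extended
Disallow: /

User-agent: *
Allow: /
"""

parser = RobotFileParser()
parser.parse(robots_txt.splitlines())

def allowed(agent, url="https://example.com/services/"):
    """Return True if the given user agent may fetch the URL under these rules."""
    return parser.can_fetch(agent, url)

for agent in ("GPTBot", "ClaudeBot", "Google-Extended", "Googlebot"):
    print(agent, allowed(agent))
```

Note that nothing in this file mentions llms.txt: the two files solve different problems and can be published side by side.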

Where do I host llms.txt?

At the root of your primary domain: https://yoursite.com/llms.txt. Not in a subfolder. Not on a subdomain unless that subdomain is genuinely a separate property. The file must be accessible as raw Markdown when fetched — if your server returns HTML or applies caching transforms, AI tools can't parse it cleanly. For WordPress sites, Yoast and several other plugins will generate and serve the file automatically.
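A quick way to confirm the file is served as raw Markdown is to look at the response's Content-Type header and the first characters of the body. The sketch below is a heuristic only: the accepted media types and the "starts with an H1" rule are assumptions for this example, and `fetch` is defined but not called here because it needs network access.

```python
from urllib.request import Request, urlopen

def fetch(url):
    """Fetch a URL and return (content_type, body). Requires network access."""
    request = Request(url, headers={"User-Agent": "llms-txt-check/0.1"})
    with urlopen(request, timeout=10) as response:
        content_type = response.headers.get("Content-Type", "")
        body = response.read().decode("utf-8-sig", "replace")
    return content_type, body

def looks_like_raw_markdown(content_type, body):
    """Heuristic check that a response is plain Markdown, not an HTML page."""
    media_type = content_type.split(";", 1)[0].strip().lower()
    if media_type not in ("text/plain", "text/markdown"):
        return False
    head = body.lstrip()[:200].lower()
    if head.startswith("<!doctype") or head.startswith("<html"):
        return False
    # An llms.txt is expected to open with its H1 title.
    return body.lstrip().startswith("# ")

# Offline examples with made-up responses.
print(looks_like_raw_markdown("text/plain; charset=utf-8", "# Toronto Plumbing Co.\n"))
print(looks_like_raw_markdown("text/html; charset=utf-8", "<!doctype html><html>...</html>"))
```

Against a live site you would call `looks_like_raw_markdown(*fetch("https://yoursite.com/llms.txt"))`, replacing the placeholder domain with your own.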

How long until my business shows up in AI answers after adding llms.txt?

There's no guaranteed timeline, and anyone who tells you otherwise is guessing. Some AI systems fetch fresh content quickly; others rely on older indexes. The real driver of AI visibility is the sum of your signals — clean site architecture, strong local signals, consistent citations, useful content, reviews, and schema. llms.txt supports those signals; it doesn't replace them. Expect gradual improvement over weeks to months, only if the rest of your SEO foundation is solid.

Is llms.txt an official standard like robots.txt?

Not yet. robots.txt is a formalized standard (RFC 9309) that every major search engine and AI crawler officially honors. llms.txt is a community-driven proposal by Jeremy Howard of Answer.AI, published at llmstxt.org. It's been adopted by Anthropic, Stripe, Cloudflare, Vercel, Zapier, Perplexity, and hundreds of thousands of other sites, but no major AI platform has officially committed to reading it. The IETF has launched an AI Preferences Working Group for related standards, but llms.txt itself is not yet part of that formal process.

How do I know if my SEO agency does AI SEO?

Ask them four questions. One: do you publish an llms.txt and llms-full.txt on the sites you work on? Two: how do you keep those files in sync with site content? Three: do you test whether the sites actually surface in ChatGPT, Claude, Gemini, and Perplexity answers for real customer queries? Four: how does your schema implementation align with AI visibility, not just Google rich results? If they can answer all four concretely, they're doing the work. If they can't, they may still be strong on traditional local SEO — but AI visibility isn't part of what they do yet.

The bottom line

llms.txt is not a silver bullet. It's not a ranking signal. It's not going to transform your traffic next month. But it is the leading edge of how AI systems discover, parse, and cite local businesses — and the supporting file stack around it is where the real work lives.

If your current agency can't explain the difference between llms.txt and llms-full.txt, or why supporting signal files matter for local search, that's useful information. It doesn't mean they're bad at what they do. It means AI visibility isn't part of what they do yet.

If you want an AI visibility agency to look at your site and show you exactly what's missing — llms.txt, schema, supporting signals, and the local SEO basics underneath — book a free audit. No pressure, no sales script. Just a clear picture of where you stand and what it takes to get recommended by ChatGPT, Gemini, and Google AI Overviews.